So, what is ChatGPT? And what does its existence mean for teachers and administrators?

In a nutshell, ChatGPT is an AI application from OpenAI that creates human-like conversations, answers questions, and, perhaps most frighteningly of all, generates text that covers whatever topic users feed into it. Thankfully, the key word in that sentence is “human-like” and not “human,” a major difference that makes the app more of a Google-searched book report than some sort of Robo-Shakespeare. (For now, at least.)

Nevertheless, as with any tool on the Internet, the program can be misused. ELA students might try to use ChatGPT to pilfer story details meant to measure reading comprehension, if not operate it as an outright plagiarism machine. And even though it doesn’t make french fries yet, the app can in fact generate code. With issues like that in mind, here’s what teachers need to know about ChatGPT, the artificial intelligence that produces text.

Are Educators Concerned About ChatGPT?

Like a Facebook relationship status circa 2005, it’s complicated.

The app remains in its infancy, with the techno-geniuses behind the curtain busy collecting data and feedback for the inevitable paid version. So, at this point, there are more questions than answers, especially when it comes to the technology’s impact on education.

According to a recent report, which surveyed a little over 1,000 educators, a sizable share of K–12 teachers have started using ChatGPT for a variety of tasks. The report states:

- 30% of teachers have used the app to plan lessons.

- 30% of teachers report using it to engineer fun and impactful classroom activities.

- 27% of teachers leverage the software to gain factual information for their teaching.

All in all, these stats represent a somewhat positive outlook on the app. To a big chunk of the educator community, this new-fangled text-manifestation machine represents another quality tool to add to their pedagogical arsenal.

Other teachers have a more dystopian view, seeing the app as a way to automate content that only appears legitimate at face value. These concerns are legitimate. More and more, our culture is falling prey to the possibility of taking inaccurate information as ironclad truth or looking at facts as obstacles to pursuing a predetermined agenda.

ChatGPT in Action

Let’s not mince words — in terms of space age performance, ChatGPT is pretty darn impressive. When you plug a prompt into its algorithm, the computer screen fills with fairly detailed sentences. It dives so deep into the topic that it makes attempts to speculate, going beyond what one finds at the first Google search result.

Despite the software’s impressive magic tricks, the question remains: How accurate is the information it spits out? Does it produce quality content? At a glance, the output appears as thoughtful, researched language. But there’s more than meets the eye.

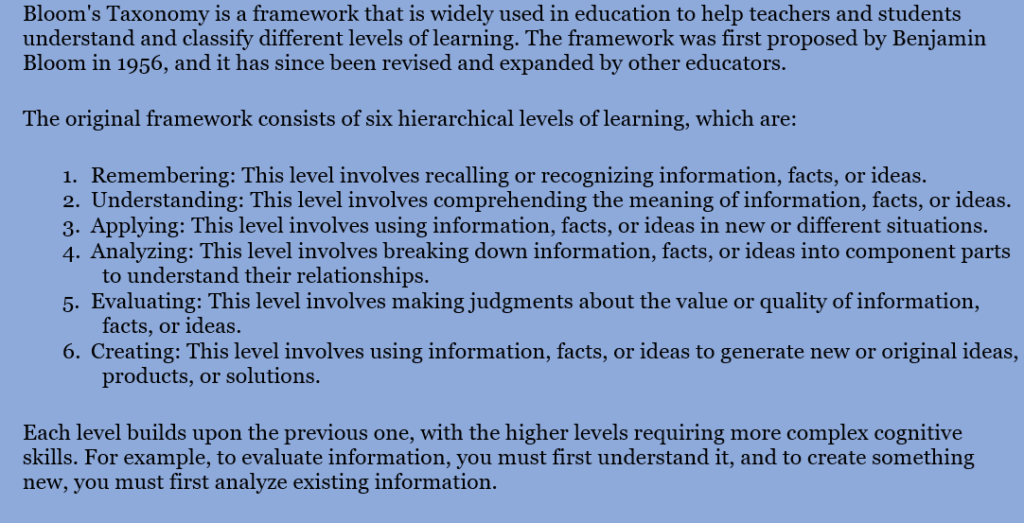

Here’s what happened when we asked the machine to define Bloom’s Taxonomy.

OK, not too shabby. But there’s a deal-breaker here. The response reads as though ChatGPT did a Google search, looked at multiple sources, and rehashed information available on Wikipedia. And, at least in our estimation, these results don’t reflect what it takes to compose a quality piece of writing.

Three Issues with ChatGPT and Student Achievement

As a team of educators, we see three major issues with ChatGPT and how it impacts student outcomes. And given the vital importance of ELA instruction, these concerns are not petty or inconsequential gripes.

ChatGPT Eschews the Writing Voice

We’ll be 100% honest with you, dear reader: we had oodles of fun playing with ChatGPT. In one afternoon, we manifested Leonardo da Vinci’s biography in the voice of a dog and a description of A Midsummer Night’s Dream as written by Bart Simpson.

In creating wacky prompts, we noticed the machine developing a rhetorical habit. The content read like a Wikipedia article with insignificant personality details sprinkled throughout.

For example, when the dog-writer (whom we lovingly christened Bark Twain) told us about the most famous figure in the Renaissance Period, it would simply start or end its sentences with an unimaginative, predictable character nod. In this case, the text would include “woof woof” to designate the speaker as a canine. An actual dog might think about playing fetch with the Mona Lisa or snagging some bread off the Last Supper table.

While logic and factual accuracy stand as integral pillars in the temple of quality rhetoric and composition, a writer’s voice makes a piece loveable, memorable, and, in many cases, worth reading. Robots have traveled far since the Digesting Duck hit the streets in 1739, but these machines face a long road before becoming Kurt Vonnegut.

ChatGPT Spits Out Incorrect Information

Not everything on the Internet or television news reflects reality. In 2016, the delivery of misinformation became so mainstream that Oxford Dictionaries named “post-truth” its word of the year. While technology has largely steered us toward greater enlightenment, its widespread use means that honest, quality information sometimes flies out the window.

Let’s face it. ChatGPT doesn’t separate fact from fiction, at least not in a cogent way that weighs nuances and provides context. In other words, sometimes it dilutes crucial details. And sometimes it gets things dead wrong. Writing is about finding the truth, understanding the world, and becoming a more thoughtful human being, and no one can do that without good intel.

For instance, when we asked the program to describe our professional development services, the robot appeared to draw on our website, teacher resources, course reviews, and other online material. It seemingly used that public information to form a semi-robust definition of what we do. It mentioned the flexibility and affordability (not bad). It mentioned state standards (true). The generated text also factored in an audience of K–12 educators, and made certain to mention their importance in society. Heck, the machine even had some unprompted kind words that flat-out endorsed our PD courses for teachers.

But the results were far from perfect. The text claimed that we work off a subscription model and ask teachers to choose between graduate credit and salary advancement. Neither of those statements is true.

Are these malicious lies? Of course not. But any human with knowledge in the subject matter would catch these inaccuracies quite easily.

The writer Enrique Dans says it best: “If people will accept Google’s word uncritically, imagine the response to ChatGPT. The answer to your search may be 100% bull [expletive], but whatever: for many people, it’s hard, reliable truth.”

Given that the app bases its logic and content on what it finds in cyberspace, which sometimes strays beyond the realm of accuracy, it’s only natural that its machine brain gets disoriented.

ChatGPT Has No Critical Thinking Skills

All of this hullabaloo leads us to the nucleus of academic writing, which is critical thinking. Yes, it’s a loaded term with intricacies aplenty. But we know beyond doubt that critical thinking prowess stretches beyond understanding information, weighing pros and cons, making thoughtful speculations, and forming strong arguments.

No, critical thinking requires humanity. Robots epitomize the antithesis of this ideal.

While the machine mimics language and performs moderate research, it fails to factor in the transdisciplinary and lifelong skills of critical thinking and human empathy. It can spoon-feed its end user information the way that Google and Wikipedia do, but it can’t arrive at logical conclusions or factor in emotional elements that only happen through the lens of personal experience.

Really, it’s all a matter of love and understanding mixed with logic and goodwill. And despite the fact that the Terminator learned the concept of human connection at the end of the second movie, the machine never figured out how to truly experience it.