- Courses

- State Requirements

- Alabama

- Alaska

- Arizona

- Arkansas

- California

- Colorado

- Connecticut

- Delaware

- District of Columbia

- Florida

- Georgia

- Hawaii

- Idaho

- Illinois

- Indiana

- Iowa

- Kansas

- Kentucky

- Louisiana

- Maine

- Maryland

- Massachusetts

- Michigan

- Minnesota

- Mississippi

- Missouri

- Montana

- Nebraska

- Nevada

- New Hampshire

- New Jersey

- New Mexico

- New York

- New York City

- North Carolina

- North Dakota

- Ohio

- Oklahoma

- Oregon

- Pennsylvania

- Rhode Island

- South Carolina

- South Dakota

- Tennessee

- Texas

- Utah

- Vermont

- Virginia

- Washington

- West Virginia

- Wisconsin

- Wyoming

- Groups

- Professional Development

- Video Platform

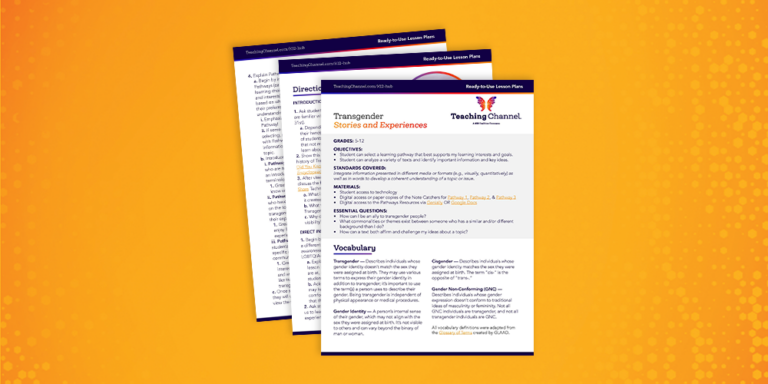

- Resources
